1) When to use Kafka:

Use Kafka for real-time data streaming, message queuing, and event-driven architectures.

Key features of Kafka include:

- Distributed messaging system

- High throughput and low latency

- Fault tolerance and scalability

- Persistent storage

- Stream processing capabilities

- Integration with various programming languages and frameworks

- Message queuing refers to sending, storing, and managing messages in a queue, where they are held until a consumer processes them. In Kafka, producers publish messages to topics, and multiple consumers can subscribe to these topics and process the messages asynchronously. Unlike a classic queue, a Kafka topic is an append-only log: messages are not deleted when they are read but are retained for a configurable period, and each consumer group keeps track of its own position (offset) in the log. This decouples the producers of data from its consumers, enabling efficient communication and scalability in distributed systems.
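Kafka's own clients need running brokers, so the sketch below models the idea in memory instead, in JavaScript because that is the language used later in these notes. `ToyTopic`, `publish` and `poll` are hypothetical names, not the Kafka API, and the model ignores partitions, brokers and replication. It only illustrates the decoupling described above: the producer never waits for consumers, and each consumer group reads every message at its own pace by tracking its own offset into an append-only log.

```javascript
// A toy, in-memory model of a Kafka-style topic. NOT the Kafka API:
// ToyTopic, publish() and poll() are hypothetical names for illustration.
class ToyTopic {
  constructor(name) {
    this.name = name;
    this.log = [];            // append-only: messages are not removed when read
    this.offsets = new Map(); // consumer group id -> next offset to read
  }

  // Producer side: append a message and return its offset.
  publish(message) {
    this.log.push(message);
    return this.log.length - 1;
  }

  // Consumer side: return every message this group has not read yet,
  // then advance (commit) the group's offset to the end of the log.
  poll(groupId) {
    const start = this.offsets.get(groupId) ?? 0;
    const batch = this.log.slice(start);
    this.offsets.set(groupId, this.log.length);
    return batch;
  }
}

const orders = new ToyTopic('orders');
orders.publish({ id: 1, item: 'book' });
orders.publish({ id: 2, item: 'pen' });

// Two independent consumer groups each see the full stream.
console.log(orders.poll('billing').map((m) => m.id));  // logs [ 1, 2 ]
console.log(orders.poll('shipping').map((m) => m.id)); // logs [ 1, 2 ]

// A later message is delivered only once per group.
orders.publish({ id: 3, item: 'lamp' });
console.log(orders.poll('billing').map((m) => m.id));  // logs [ 3 ]
```

Keeping one offset per group, rather than deleting messages on read, is what lets a new consumer group join later and still replay everything that is retained.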

- Brokers:

When I started learning Node.js and became familiar with the event loop, I asked myself: in which phase of the event loop do promise callbacks run? I could not find an explicit answer to this question in the documentation.

The event loop in Node.js consists of several phases, each responsible for a different type of asynchronous operation. Promises are a fundamental part of asynchronous programming in JavaScript, so it is natural to ask which of these phases runs their callbacks.

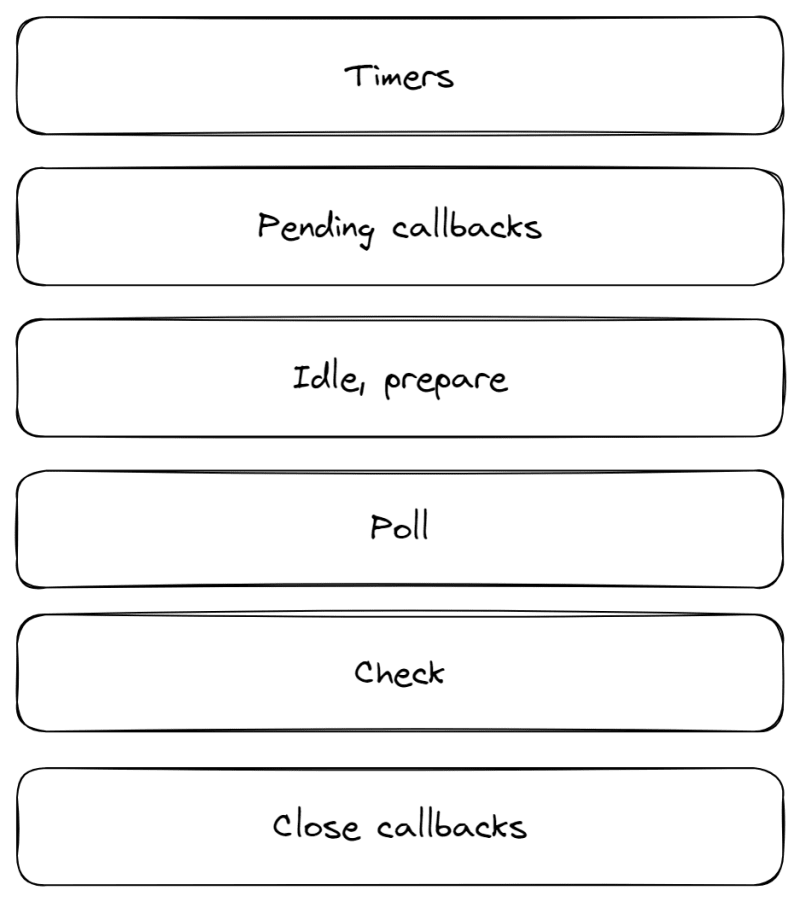

The diagram below shows the phases of the event loop:

Timers: In this phase, the callbacks of timers whose delay has elapsed are executed. Such timers are created with setTimeout() or setInterval().

Pending callbacks: This phase executes callbacks for some system operations that were deferred to the next loop iteration, such as certain TCP errors. For example, if a TCP socket receives ECONNREFUSED while connecting, some Unix-like systems delay reporting the error, and its callback is queued for this phase.

Idle, prepare: These phases are used only internally by Node.js (specifically by libuv); application code has no direct control over them.

Poll: This phase calculates how long it should block waiting for I/O, then retrieves new I/O events and executes their callbacks (almost all I/O callbacks, except close callbacks, timers, and setImmediate() callbacks). If there is nothing else to do, it may block here until new events arrive.

Check: This phase runs callbacks scheduled with setImmediate(), immediately after the poll phase completes. If any setImmediate() callbacks are pending, the poll phase does not block waiting for new I/O; it ends and the loop moves on to the check phase.

Close callbacks: In this phase, callbacks for "close" events are executed. For example, if a socket is closed abruptly (e.g. with socket.destroy()), its "close" event is emitted in this phase.
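The timers, poll and check phases can be observed with a small Node.js sketch (my own illustration; `timerVsImmediateInsideIO` is a hypothetical helper name). It schedules a 0 ms `setTimeout()` and a `setImmediate()` from inside an `fs.stat()` callback, which runs in the poll phase. The check phase follows in the same iteration, while the timer has to wait for the timers phase of the next iteration, so the immediate always runs first.

```javascript
// Node.js (CommonJS) sketch; timerVsImmediateInsideIO is a hypothetical name.
const fs = require('fs');

// Resolves with the order in which a 0 ms timer and an immediate ran
// when both were scheduled from inside an I/O callback.
function timerVsImmediateInsideIO() {
  return new Promise((resolve) => {
    const order = [];
    const record = (label) => {
      order.push(label);
      if (order.length === 2) resolve(order);
    };

    // fs.stat completes asynchronously; its callback runs in the poll phase.
    fs.stat('.', () => {
      setTimeout(() => record('timeout'), 0);  // timers phase, next iteration
      setImmediate(() => record('immediate')); // check phase, this iteration
    });
  });
}

timerVsImmediateInsideIO().then((order) => console.log(order.join(' -> ')));
// logs: immediate -> timeout
```

Outside an I/O callback (for example, at the top level of the main module) the relative order of these two callbacks is not guaranteed, because it depends on how quickly the loop reaches the timers phase.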

So, what about promises?

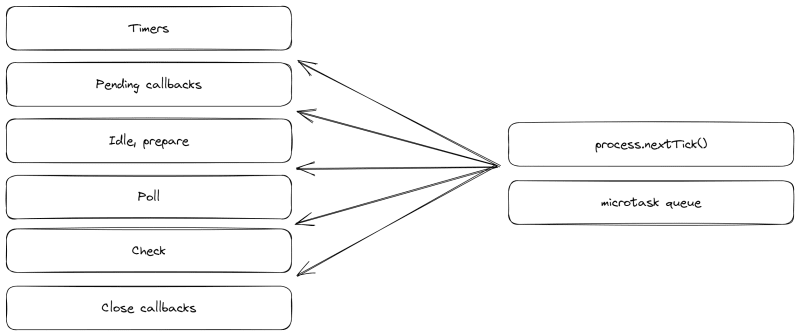

While the main phases were mentioned earlier, it is important to note that there are other tasks that occur between each of these phases. These tasks include process.nextTick() and the microtask queue (which is where promises appear).

Our schema now looks like this:

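Both queues are drained as soon as the currently running callback finishes, before the event loop moves on: first the process.nextTick() queue, then the promise microtask queue. Since Node.js 11 this happens after each individual callback, including each setTimeout() and setImmediate() callback, and not only when a phase ends. This ensures that promise callbacks run as early as possible, without waiting for the next phase or the next iteration of the event loop.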
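The sketch below (my own, assuming Node.js 11 or later; `eventLoopOrder` is a hypothetical helper name) records the order in which different kinds of callbacks run when they are all scheduled from inside an I/O callback. It shows the nextTick queue being drained before promise callbacks, and a promise queued inside one `setImmediate()` callback running before the next immediate.

```javascript
// Node.js 11+ (CommonJS) sketch; eventLoopOrder is a hypothetical name.
const { stat } = require('fs');

// Resolves with the order in which each kind of callback ran.
function eventLoopOrder() {
  return new Promise((resolve) => {
    const order = [];

    // Start inside an I/O callback (poll phase) so the ordering of
    // setImmediate() versus setTimeout() is deterministic.
    stat('.', () => {
      setTimeout(() => {
        order.push('timeout'); // timers phase of the next iteration: runs last
        resolve(order);
      }, 0);
      setImmediate(() => {
        order.push('immediate 1'); // check phase
        // Queued while the check phase runs; drained before 'immediate 2'.
        Promise.resolve().then(() => order.push('promise from immediate 1'));
      });
      setImmediate(() => order.push('immediate 2'));
      Promise.resolve().then(() => order.push('promise')); // microtask queue
      process.nextTick(() => order.push('nextTick'));      // nextTick queue
      order.push('sync'); // the rest of the I/O callback runs first
    });
  });
}

eventLoopOrder().then((order) => console.log(order.join(' -> ')));
// logs: sync -> nextTick -> promise -> immediate 1 -> promise from immediate 1 -> immediate 2 -> timeout
```

Note that the process.nextTick() callback runs before the promise callback even though it was scheduled later. Before Node.js 11, immediates in the same check phase ran as a batch, so "promise from immediate 1" would have appeared after "immediate 2".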

If you, like me, have been looking for an answer to this question, I hope this article has given you the clarity you were looking for. Understanding when promise callbacks run in the Node.js event loop is essential for effective asynchronous programming, and should help you use promises confidently in your Node.js applications.
